- Case Studies and Projects: 15+

- Hours of Practical Training: 80+

- Placement Assurance: 100%

- Expert Support: 24/7

- Support & Access: Lifetime

- Certification: Yes

- Skill Level: All

- Language: English / Tamil

Why Choose a Hadoop course from Credo?

In this Big Data Hadoop training, we offer live practical sessions on data engineering using SQL, NoSQL, and the Hadoop ecosystem. Our professional trainers deliver classroom and online training that meets advanced Hadoop training requirements and leads to Hadoop certification. Our Big Data Hadoop Certification Training in Chennai provides project and career support through hands-on practice.

Find out what our past customers have to say about Credo and their experiences with us.

Flexible Mode of Training and Payment

Hear it from our customers!

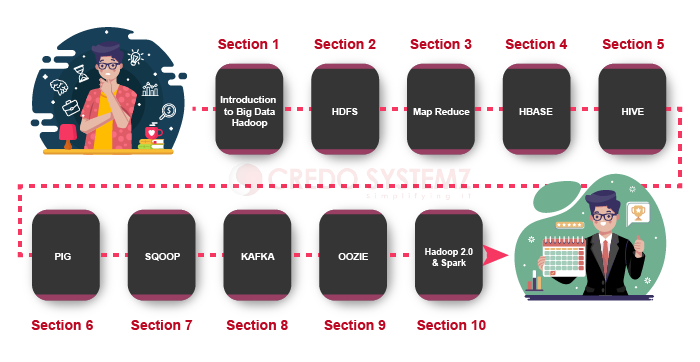

Our Hadoop Certification Training Overview

Credo Systemz’s Hadoop training course is designed by experienced experts who also work in top MNCs. Our Hadoop certification course provides practical sessions for gaining real-time experience, along with placement support.

Credo Systemz provides the best Hadoop certification online training with certified Big Data experts. It is a comprehensive Hadoop online training course, designed by Big Data experts to current industry standards, that helps you learn Big Data Hadoop through real-time scenarios. The online classes are built with a full focus on real-time Big Data Hadoop projects. Big Data Hadoop is a trending and highly valuable skill. This Hadoop online training will help you master Big Data tools such as HDFS, MapReduce, HBase, Hive, Pig, and Sqoop.

Apache Hadoop is a widely used open-source software framework for storing data and running big data applications. It spreads work across a cluster of servers so that many tasks can run in parallel. Important components of the Hadoop framework and its ecosystem are:

- HDFS

- MapReduce

- YARN

- HBase

- Oozie

- Hive

- Pig

- Spark

Hadoop is used to store and process large amounts of data easily, and most big IT companies use Hadoop for storage. As a result, job opportunities are increasing for many Hadoop positions. Big companies that have used Hadoop include Google, Yahoo, IBM, and eBay. Completing Hadoop training can improve your chances of getting a job in one of the best MNCs.

Hadoop is an open-source, Java-based framework used for storing and processing big data. The data is stored on inexpensive commodity servers that run as clusters. Created by Doug Cutting and Mike Cafarella, Hadoop uses the MapReduce programming model for quicker storage and retrieval of data from its nodes.
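To make the MapReduce idea concrete, here is a minimal sketch of a word count written in plain Python. It only imitates the three stages (map, shuffle, reduce) in a single process and does not use Hadoop or any Hadoop API; the function names `map_phase`, `shuffle`, `reduce_phase`, and `word_count` are made up for illustration.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group values by key, as a framework would between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine all counts for one word into a single total."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence on a list of lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(k, v) for k, v in shuffle(pairs).items())


if __name__ == "__main__":
    sample = ["big data needs big storage", "hadoop stores big data"]
    print(word_count(sample))
```

On a real cluster, the map and reduce steps would run as separate tasks on different nodes, each working on its own slice of the data; this sketch runs them one after another only to show the flow of data.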

Hadoop is a good fit:

- For processing very large data sets.

- For storing a diverse set of data.

- For parallel data processing.

Hadoop is not a good fit:

- For real-time data analysis.

- For a relational database system.

- For a general network file system.

- For non-parallel data processing.

The storage layer of Hadoop is the Hadoop Distributed File System (HDFS).

Hadoop is not a mere framework in the Big Data world. It has a wide ecosystem with an umbrella of related technologies, which is why a career in Hadoop is promising. A good understanding of Hadoop fundamentals is a solid foundation for a great career in Hadoop.

- You will gain knowledge of Hadoop functions and components alongside current trending technologies.

- You will learn about important search concepts such as informed and uninformed search.

- You will become familiar with logical proof, planning, and constraint satisfaction in Hadoop.

- You will be able to apply Big Data techniques to problem-solving and become a well-trained Hadoop expert.

- You will learn best practices for building, optimizing, and debugging Hadoop solutions.

- We conduct Hadoop assessments and mock interviews so that we can evaluate each candidate’s performance individually.

- You will gain an overall understanding of Big Data Hadoop and be equipped to clear the Big Data Hadoop certification.

- Credo Systemz is one of the most prominent Hadoop training institutes, offering first-class training in Chennai.

- Hadoop online training is delivered through an advanced learning program.

- Experienced trainers who work in leading MNCs.

- Shape your learning path with customized skills in Big Data Hadoop.

- Guidance for Hadoop Developer certification.

- Get practical knowledge with real-time, hands-on projects.

- Earn a completion certificate in Hadoop from Credo Systemz at the end of your training.

- Hadoop online classes help you learn all concepts of the Hadoop framework, from basic to advanced.

- You can start with basic Java concepts and progress to the Hadoop framework.

- You can attend a free online demo class with our Big Data experts.

- We are ranked among the top 10 Big Data online certification training institutes worldwide.

- Trainees who attend our Hadoop online training get recorded videos of the complete course.

- We provide the best Big Data Hadoop online training with certification.

Big Data Hadoop is one of the top trending tools in the Big Data industry. According to the Big Data survey, Hadoop is the most used Big Data tool in the industry, and its growth exceeded expectations in 2020.

The Hadoop online course from Credo Systemz is a job-oriented, project-oriented Big Data certification course that uses a large number of real-time data sets. More than 90% of organizations treat hiring Big Data experts as a top priority.

- Credo Systemz is ranked as the best Hadoop online course in India and is recommended by our alumni on Quora, Google, Facebook, and other social media.

- Big Data online courses are handled by experts and experienced professionals through live online sessions with practicals and projects.

- The Apache Hadoop course content plays a major role and covers the essential features of Big Data Hadoop.

- Our Hadoop training and placement online course covers the complete basics to teach trainees the root concepts, and also includes up-to-date Apache Spark and Scala concepts.

- Hands-on projects are an important part of the Hadoop online training. They raise every trainee’s skill set and make it easier to clear the Big Data certification.

- Our Hadoop online training program includes 100% placement assistance from our dedicated HR team.

- Trainees who completed our Big Data Hadoop online certification program have ranked us as the best online training institute in India.

- Credo Systemz is ranked as the best Hadoop online training institute in Chennai with placement for both Velachery and OMR, based on the large number of positive reviews across the internet.

- Our Hadoop online training course in Chennai (Velachery and OMR) is handled by Hadoop professional-level certified trainers.

- We provide online and corporate Hadoop training with a tailor-made fee structure.

- Our Hadoop course syllabus suits both beginners and experienced professionals who want to enhance their skills.

- Our Hadoop instructor has 12+ years of industry experience, so you learn the latest Hadoop topics.

- During the Hadoop online course you get hands-on experience with real-time projects, which builds the confidence to face real-world challenges.

- We conduct Hadoop assessments and mock interviews so that we can evaluate each candidate’s performance individually.

- We guide you through completing the Hadoop online training in Chennai, which will help you stand out in the market.

- You can also attend our free Hadoop workshops and talk to our consultants about the topics, case studies, and real-time Hadoop projects included in this training program.

- You will receive job alerts from our placement team at your registered email address and on WhatsApp. We also offer the Hadoop online course in Chennai in various formats.

A Big Data Hadoop market report projected significant growth in 2020. The global market share of Hadoop has increased all over the world, including Asia, America, Europe, and the Middle East. This has led to more company growth and a greater need for Hadoop developers.

Hadoop knowledge is very much in demand for individuals. According to Forbes, Hadoop was listed as one of the important skill sets to have in 2020. Hadoop job opportunities include:

- Business Analyst

- Big Data Engineer

- Data Analyst

- Hadoop Developer

- Hadoop Admin

- Database developer

- Hadoop Tester

- Machine Learning Engineer

- Data Scientist

NO HARD!

Basic knowledge of HTML and CSS and good knowledge of JavaScript can be very helpful if you really want to learn Angular. If you don’t know JavaScript, that is no issue: our Angular courses begin with JavaScript and other prerequisites. In 60+ hours of course duration, you become an Angular developer.

Contact Us

+91-98844 12301 / +91-96001 12302

Training Benefits!!

We are the best provider of Big Data Hadoop training in Chennai, with a focus on in-depth knowledge of Hadoop concepts, architecture, and applications along with big data. Our Hadoop training in Chennai is designed as a complete program that takes you from absolute scratch up to expert level.

- 5-15% chance of immediate placement.

- 10-15% increase in salary.

- About 30% of the job market is open to Hadoop developers.

Testimonials

- Credo Systemz Big Data Hadoop certification training is very effective to gain the respective skills. This Big Data Hadoop Course provides live training with hands-on practicals. Thanks to Credo Systemz. (Anu)

- I joined Credo Systemz Big Data Hadoop training to complete Big Data Hadoop certification using expert support. This Big Data Hadoop Course in Chennai provides standard training using the best trainers with a lot of practicals. Thank you, Credo Systemz. (Karthik)

Join Us

CREDO SYSTEMZ provides the Best Hadoop Certification Online Training in Chennai to promote you into a skilled professional with 100% Free Placement Support.

Join Now

Hadoop Certification Online Course FAQs

Online session schedules are flexible and depend on the available timings of both trainees and trainers. We offer both weekend and weekday sessions, so you can learn at a time that is convenient for you.

Sure, you can talk to your trainer whenever you have doubts. You will have a separate WhatsApp group with your batch mates and trainers so that you can reach them at any time.

“Write Less and Do More”: it standardizes the web application structure and also provides a future template.

- No need to learn another scripting language. It is just pure JavaScript and HTML.

- Easy to use, and you can get started in a few minutes.

- Supports MVC completely because of its structure.

- A declarative user interface.

- Behavior added by extending the simple HTML DOM.

To meet the needs of every individual, we offer different batch schedules: classroom, online, corporate training, and fast-track sessions. You can book your batch according to your needs.

You can contact us at +91 9884412301 / 9600112302 or reach us through the contact form to book your seat in the upcoming batch for your Hadoop Big Data training.

Yes, you can. Credo Systemz offers installment payment via cash, cheque, card, and UPI services.

Yes. Our Big Data training program is designed with real-time projects, case studies, and practicals, so you will work on live projects during your training period. It is suitable for both beginners and experienced professionals, and it gives you the confidence to become a Big Data expert.

Our Hadoop instructors have 15+ years of experience in the IT industry. They currently work as Hadoop professionals in top MNCs.

Our course journey includes:

- Free Demo

- 100% Job Assistance

- Mock Interview

- Realtime Project

- Job Oriented Programming

Our Angular course duration is 60+ hours, which covers all the modules of the Hadoop Certification Course. During this time, we reinforce the concepts, techniques, and tools through practice, and you take Angular assessments at various levels. You also work on a real-time Angular application project.

Yes, we provide 100% placement assistance. Credo Systemz helps students with professional resume building, mock interviews, and group discussion training sessions so that they can face interviews with confidence. After the mock interviews, we work hard to improve your technical skills and interview confidence. This support continues until you get your dream job.

No hurry! Credo Systemz allows you to select your preferred payment method: cash, card, cheque, or UPI services.

To know about our exciting offers, concessions, and group discounts, call us now: +91 9884412301 / +91 9600112302.

Feel free to enquire more. Mail us at info@credosystemz.com or call us now: +91 9884412301 / +91 9600112302.

Our Alumni Work in top MNC’S

Credo Systemz has placed thousands of students in various top multinational organizations. Witnessing the progress of our alumni gives us immense gratification.

Join the success community to build your future

Enroll now

Get Industry Recognized Certification

Credo Systemz’s certificate is highly recognized by 1000+ global companies around the world. Credo Systemz’s Hadoop certification shows the skill set of the aspirants with global recognition.

Benefits of Hadoop Certification online

- To demonstrate the skills and knowledge of Big Data Hadoop.

- To add weightage to the resume.

- To stand out in the cloud job market with guaranteed successful careers.